At Coursebox, protecting your data and using AI responsibly are core to how our platform operates. As an AI-powered learning system, we are committed to strong data privacy, security, transparency, and ethical AI practices.

This page explains how Coursebox safeguards course content and learner data, governs the use of AI, and meets global security and compliance standards—so you can use AI-powered features with confidence and trust.

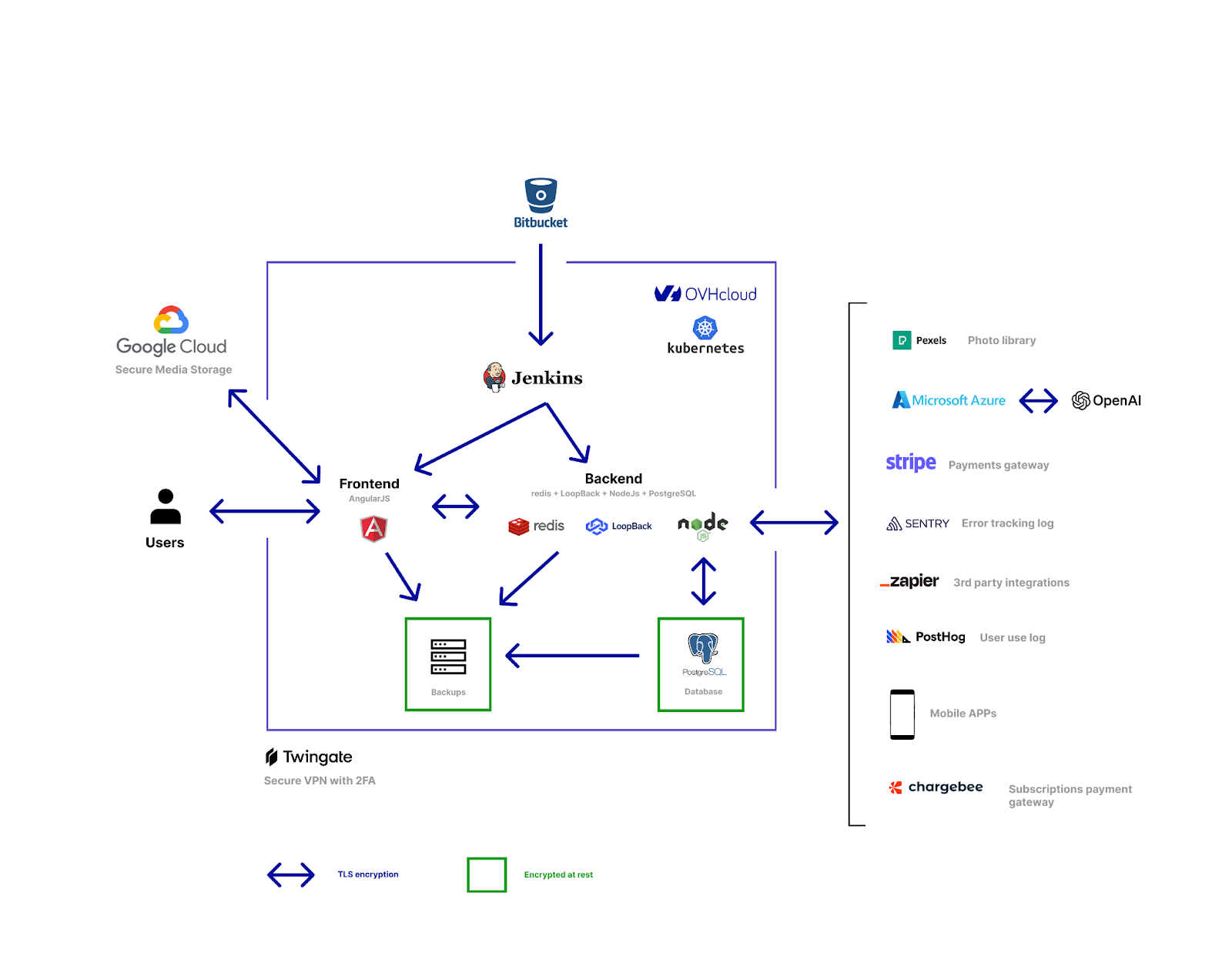

Platform architecture

For questions or further information, please contact us at support@coursebox.ai.

Securing AI with Azure OpenAI

At Coursebox, protecting client data and ensuring platform security are top priorities. In June 2024, we transitioned to Microsoft’s Azure OpenAI Service to deliver a safer, more compliant environment for all AI-powered features within the platform.

This move was driven by:

- Client concerns about data privacy and sensitive information handling.

- The need for greater transparency and control over how AI models operate.

- A commitment to meeting enterprise-level security and compliance standards.

Why Azure OpenAI?

Unlike the public OpenAI API, which processes data through shared infrastructure, Azure OpenAI Service provides:

- Enterprise-Grade Security

  - Hosted within Microsoft Azure’s trusted cloud infrastructure.

  - Built-in protections against data leakage and unauthorised access.

- Data Privacy & Compliance

  - GDPR-aligned data storage and handling.

  - Greater assurance for clients in regulated industries.

- Transparency & Control

  - Clearer visibility into how AI models manage sensitive or copyrighted content.

  - Options for organisations to set boundaries around AI usage.

- Reliability & Performance

  - Backed by Azure’s global datacentres with high uptime and resilience.

  - Scalable infrastructure to support growing enterprise needs.

What This Means for You

By moving to Azure OpenAI, Coursebox ensures that:

- Your course content, learner data, and proprietary materials are handled with stricter safeguards.

- AI-powered features (such as AI Writer, AI Tutor, and AI Video) operate in a controlled, secure environment.

- Organisations gain confidence and peace of mind knowing their data is not used to train public AI models.

Coursebox Data Privacy

Over the last year, some of our clients have asked whether OpenAI retrains its models on the documents and data they upload via OpenAI's Davinci or ChatGPT interface at Coursebox. While OpenAI has stated that it does not use data submitted by customers via the API to train its models unless customers explicitly opt in, we moved to Azure's OpenAI service in June 2024 to provide additional peace of mind about how your data is used.

Navigating Data Privacy with AI: OpenAI vs Azure OpenAI Service

As artificial intelligence (AI) continues to advance, concerns around data privacy and ownership have become increasingly prevalent. Two major players in the AI space, OpenAI and Microsoft's Azure, offer different approaches to handling customer data, each with its own set of implications. In this article, we'll explore the key differences between using OpenAI's API and Azure's OpenAI Service, with a particular emphasis on data privacy and usage.

The OpenAI Approach

OpenAI, a renowned AI research company, offers an API that allows developers to access its language models and integrate them into various applications. OpenAI has measures in place to protect user privacy and data, as outlined in its privacy policy. It has also clarified that it does not use data submitted by customers via the API to train or improve its models unless customers opt in. This provides a degree of assurance for businesses concerned about data privacy.

The key points are:

- OpenAI does not use your API data to train its models by default.

- If you want to allow OpenAI to use your data for model improvement, you can explicitly opt in.

- There are data retention policies in place, with most endpoints having a 30-day default data retention period after which the data is deleted, unless you choose otherwise.

- For sensitive applications, zero data retention options are available where request/response data is not persisted at all.

Note: Your documents and data uploaded through the Davinci API are kept private and not used for training OpenAI's models, maintaining your data privacy, unless you proactively choose to share the data.

The Azure OpenAI Service Approach

On the other hand, Microsoft's Azure OpenAI Service takes a different approach, offering greater control and assurance over data usage and privacy. This service allows you to create and manage your own fine-tuned models based on OpenAI's base models. Crucially, this means that you can fine-tune the model using your company's proprietary data, and the resulting fine-tuned model will be specific to your organisation.

Advantages of Azure OpenAI Service:

- Data Privacy and Ownership: Azure provides assurances that your data will not be used for any other purpose or shared with third parties, including OpenAI. This level of data privacy and ownership is a significant advantage for businesses and organisations that handle sensitive or copyrighted information.

- Control over Data: By using Azure's OpenAI Service, you can ensure that your copyrighted content is used solely for serving your paid learners, customers, or internal stakeholders. The fine-tuned model created on Azure will be specific to your data and will not be shared or used to train OpenAI's publicly available models.

- Customisation: The Azure service allows you to create and manage your own fine-tuned models, offering greater customisation tailored specifically to your organisational needs.

- Data Retention: Azure's terms of service explicitly state that customer data will not be accessed or used for any other purpose, addressing any potential concerns about data ownership and privacy.

Why Azure May Be Better Than OpenAI API:

- Increased Data Security: Azure’s data handling policies provide a higher level of security, ensuring that your data remains within your control and is not used to train external models.

- Custom Models: The ability to fine-tune models with your own data allows for more accurate and relevant AI solutions tailored to your specific business requirements.

- Peace of Mind: For many organisations, data ownership and privacy are paramount. Azure’s clear policies on data usage offer peace of mind that your sensitive information will not be exploited or shared without your consent.

Our clients own the copyright to the content on their instance of the Coursebox LMS, and they understandably want to ensure that OpenAI does not use this data to train its models and potentially share their copyrighted knowledge with others who are not their paid customers.

By using Azure's OpenAI Service, we can address these concerns head-on. The fine-tuned model created on Azure will be specific to client data and will not be shared or used to train OpenAI's publicly available models. Azure's terms of service explicitly state that customer data will not be accessed or used for any other purpose, addressing any potential concerns about data ownership and privacy.

The Choice: Convenience vs. Control

Ultimately, the decision between using OpenAI's API and Azure's OpenAI Service comes down to a trade-off between convenience and control. OpenAI's API offers a more straightforward and potentially easier integration process. Azure's OpenAI Service requires more upfront work to fine-tune the model with your data, but it provides greater control and assurance over data ownership and privacy. The Azure approach may be more suitable for organisations that handle sensitive or copyrighted information and prioritise data privacy and ownership.

As AI continues to evolve and become more integrated into various industries, the issue of data privacy and ownership will only become more critical. By understanding the differences between OpenAI's API and Azure's OpenAI Service, organisations can make informed decisions that align with their data privacy and ownership priorities.

References

- LinkedIn (n.d.) OpenAI API Reference - Superyacht CRM. Available at: LinkedIn

- Microsoft (2023) What is Azure OpenAI Service? Available at: Microsoft

- OpenAI (2023a) Privacy Policy. Available at: OpenAI

- OpenAI (2023b) How your data is used to improve model performance. Available at: OpenAI

- OpenAI (2023c) Data usage for consumer services FAQ. Available at: OpenAI Help

Coursebox General Data Protection Regulation (GDPR) Compliance

Coursebox is committed to protecting the privacy and security of our clients and their learners. As an AI-powered learning platform serving clients globally, we adhere to the General Data Protection Regulation (GDPR), ensuring that all personal data of individuals in the European Union (EU), European Economic Area (EEA), and other applicable jurisdictions is handled lawfully, fairly, and transparently.

Coursebox Pty Ltd has been formally assessed and certified for GDPR compliance by American Quality Standards Registrars (AQSR), accredited by the United States Accreditation Council (USAC).

Certificate Details:

- Certificate Number: 17412

- Date of Registration: 11 June 2025

- Expiry Date: 10 June 2026

- Re-certification Date: 10 June 2028

- Scope: AI-powered learning platform enabling rapid course creation, corporate training, and vocational education solutions, including automation of assessments, tutoring, and content generation.

What GDPR Compliance Means for Clients

1. Data Protection & Privacy Rights

Coursebox upholds the fundamental rights of individuals under GDPR, including access, rectification, erasure ('right to be forgotten'), restriction or objection to processing, and data portability. Clients and their learners can request access to, correction of, or deletion of their data at any time.

2. Lawful Data Processing

All personal data collected and processed by Coursebox is based on lawful grounds: contractual necessity, legitimate interests, or consent.

3. Data Security & Safeguards

Coursebox employs industry best practices aligned with SOC 2 and ISO 27001 standards, including encryption, penetration testing, access controls, and secure hosting environments.

4. Data Transfers Outside the EU

Where personal data is transferred outside the EU/EEA, adequate safeguards are ensured through Standard Contractual Clauses (SCCs) and GDPR-compliant cloud providers.

5. Annual Assessments & Certification

Our GDPR certification is valid for three years, subject to annual assessments to ensure ongoing compliance.
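The certificate dates listed above are consistent with a three-year cycle that starts on the registration date, with an assessment due at the end of the first year. A minimal sketch of that arithmetic, using only the dates stated on this page (the helper name `period_end` is illustrative and not part of any Coursebox or AQSR tooling):

```python
from datetime import date, timedelta


def period_end(start: date, years: int) -> date:
    """Return the last day of a period that begins on `start` and lasts `years` years."""
    # Assumes `start` is not 29 February, which holds for the date used here.
    return start.replace(year=start.year + years) - timedelta(days=1)


registration = date(2025, 6, 11)  # Date of Registration from the certificate details

annual_expiry = period_end(registration, 1)    # end of the first annual period
recertification = period_end(registration, 3)  # end of the three-year cycle

print(annual_expiry.isoformat())    # 2026-06-10
print(recertification.isoformat())  # 2028-06-10
```

Both results match the Expiry Date and Re-certification Date shown in the certificate details.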

What Clients Need to Know

- For EU and EEA Clients: All data processing activities fully align with GDPR requirements, and Coursebox’s certification may be cited in compliance reporting.

- For Clients in Other Regions: Even if GDPR is not legally required, Coursebox applies the same high standards globally.

- Support Requests: Learners or administrators may exercise GDPR rights via Coursebox Support.

How to Verify Our GDPR Certification

Clients can verify the authenticity of Coursebox’s GDPR certificate via the AQSR portal at www.aqsrworld.com using Certificate Number 17412.

In summary: Coursebox is fully GDPR-compliant and certified. We take data protection seriously and provide transparency, security, and control over personal data for all clients, whether in the EU or beyond.

Hosting with Security

At Coursebox, we take hosting and security seriously. Our infrastructure is designed to protect your data, provide reliable performance, and meet compliance needs for organisations of all sizes.

Default Hosting — OVH France

By default, every Coursebox portal is securely hosted on OVH France, a leading European cloud provider.

Why OVH France?

- Security first: ISO/IEC 27001 certified data centres.

- Compliance: Meets GDPR and EU data protection regulations.

- Reliability: Redundant infrastructure and daily backups (14 days by default).

- Performance: Optimised servers for high availability and scalable learning delivery.

This setup ensures a strong, compliant foundation for your learning platform.

Add-On Hosting for Business & Enterprise Clients

We understand that some organisations have specific hosting policies or geographic requirements. That’s why Business and Enterprise clients can choose Google Cloud hosting in regions outside France as an add-on.

Benefits of Google Cloud hosting:

- Regional flexibility: select hosting closer to your learners or business HQ.

- Enterprise security: leverage Google’s advanced cloud infrastructure.

- Scalability: elastic resources for large deployments and rapid growth.

- Integration: align hosting with existing enterprise Google Cloud setups.

Which Option Should You Choose?

- OVH France (included): Best for organisations seeking GDPR compliance and reliable performance out of the box.

- Google Cloud (add-on): Recommended for larger organisations or enterprises needing hosting in other global regions, or those with strict IT/security requirements.

How to Enable Custom Hosting

1. Contact your Coursebox Account Manager or our Support Team.

2. Let us know your preferred hosting region and add-on requirements.

3. Our team will guide you through the migration or setup process.

Additional AI Service Providers & Data Processing

As Coursebox continues to evolve its AI capabilities, we have expanded our use of specialised, enterprise-grade AI services. The following section outlines additional third-party providers used within the Coursebox platform from 2026 onward, including their purpose and data handling considerations.

All providers are selected based on security posture, reliability, and alignment with responsible AI and data protection standards.

1. AI Image Generation – Google Gemini Pro (2026 Onward)

From 2026, Coursebox uses Google Gemini Pro to securely generate AI-powered images within the platform.

Purpose:

- Generation of educational illustrations and supporting visual content.

- AI-assisted imagery for course materials.

Data Handling:

- Image prompts are processed securely via API.

- Generated outputs are used solely within the client’s Coursebox environment.

- Coursebox does not permit the use of client course content for public model training.

Gemini Pro was selected for its enterprise-grade infrastructure, reliability, and alignment with modern AI governance standards.

2. Text-to-Speech – Microsoft Azure Neural TTS

Coursebox uses Microsoft Azure Text-to-Speech (TTS) services for AI-generated voice narration.

Purpose:

- Converting course text into natural-sounding voiceovers.

- Supporting accessibility and multilingual learning experiences.

Data Handling:

- Text submitted for speech synthesis is processed securely within Azure infrastructure.

- Azure OpenAI and Azure Cognitive Services operate under Microsoft’s enterprise data protection commitments.

- Data is not used to retrain public models.

This service operates within Microsoft’s secure cloud ecosystem, aligned with our broader Azure infrastructure strategy.

3. Document Image Extraction – Mistral

When users upload documents (including ODF and similar file formats), Coursebox may use Mistral-based models to extract embedded images or structured visual content.

Purpose:

- Extracting diagrams and images from uploaded documents.

- Supporting AI-driven course conversion workflows.

Data Handling:

- Processing is performed via secure API.

- Extracted content remains within the client’s Coursebox environment.

- Client content is not used to train external public models.

4. AI Video Generation – HeyGen (Exception)

Coursebox integrates with HeyGen via API to enable AI-generated avatar videos within the platform.

Purpose:

- Creation of AI avatar instructors.

- Text-to-video course presentations.

- Personalised learning video content.

HeyGen Data Handling:

If AI video generation is enabled, content submitted to HeyGen is processed in accordance with HeyGen’s Privacy Policy. HeyGen formally confirmed in writing on 26 February 2026 that Coursebox’s Enterprise API account has been fully opted out of model training and removed from all data training pipelines. This arrangement is approved and in effect.

Reference:

https://www.heygen.com/privacy

5. Chatbot Infrastructure – Chatbase (Coursebox Support Chatbot)

Coursebox uses Chatbase to power the Coursebox Support AI chatbot, which assists administrators.

Purpose:

- Conversational learner support.

- Knowledge base querying.

- AI-powered Q&A experiences.

Data Handling:

- Based on Chatbase’s published privacy policy, customer data is not used to train public foundation models.

- Data is processed to provide chatbot responses within the client’s environment.

Reference:

https://www.chatbase.co/legal/privacy

Clients are encouraged to review Chatbase’s privacy documentation for full transparency.

Coursebox Responsible AI Development Practices and Transparency Policy

Version: 1.0 (Regulatory-referenced edition)

Last updated: 26 February 2026

Owner: Coursebox AI Governance & Security

1. Purpose

Coursebox is an AI-enabled learning management system (LMS) used to create, deliver, and administer learning content. This policy describes Coursebox’s responsible AI development and deployment practices, including governance, risk management, privacy/security controls, and transparency measures for AI-assisted learning content. It is designed to support customer due diligence and align with widely recognised government and standards-body expectations (NIST, 2023; OECD, 2024; UNESCO, 2021).

2. Scope

This policy applies to:

- Coursebox’s AI-enabled product features (e.g., content generation, tutoring-style interfaces, automation that supports course creation).

- Coursebox’s selection and oversight of third‑party AI suppliers used to provide optional functionality.

- Transparency controls provided to customers, including white-label (rebranded) deployments.

This policy does not replace a customer’s own legal analysis or compliance obligations; customers remain responsible for configuring Coursebox in accordance with their internal policies and applicable laws (DISR, 2025).

3. Responsible AI principles

Coursebox’s Responsible AI approach is guided by internationally recognised principles emphasising human-centricity, safety, transparency, accountability, robustness, and respect for privacy and human rights (OECD, 2024; UNESCO, 2021).

3.1 Fairness and inclusion

We aim to reduce the risk of biased or exclusionary learning outputs by promoting human review of generated materials and supporting customer editing prior to publication.

We design user workflows so customers can validate content for cultural, demographic, and contextual appropriateness before learners see it.

3.2 Transparency and explainability

We aim to make it clear where AI is used in the product experience, especially for interactive AI features and synthetic media (European Commission, 2024; DISR, 2025).

3.3 Accountability and governance

We maintain internal governance for AI feature design, supplier risk review, and incident response, consistent with recognised AI risk management frameworks (NIST, 2023; ISO/IEC, 2023a; ISO/IEC, 2023b).

3.4 Safety, robustness, and security

We implement security controls to protect customer data and reduce misuse risks (e.g., access control, monitoring, and review pathways for higher-risk outputs).

We continuously improve controls as AI capabilities evolve (NIST, 2023; NIST, 2024).

4. Governance structure and lifecycle controls

Coursebox operates AI governance across the AI feature lifecycle (design → testing → deployment → monitoring → improvement) in line with risk management best practices (NIST, 2023; NIST, 2024).

4.1 Risk assessment and change management

We assess AI features for likely harms (e.g., inaccurate content, harmful stereotypes, unsafe instructions, impersonation/synthetic media risks).

We apply change controls for material AI feature updates, including supplier changes, model/provider changes, and new high-impact features.

4.2 Human oversight

Coursebox is designed so that AI-generated course materials can be reviewed and edited by the course author/admin prior to publication (human-in-the-loop publishing).

For interactive AI experiences (e.g., tutor-like chat), we emphasise transparency and appropriate user-facing cues (European Commission, 2024; OAIC, 2025).

4.3 Monitoring, issue reporting, and remediation

We maintain channels for customers to report AI quality/safety issues.

Where appropriate, we update prompts, filters, workflows, or supplier configurations to reduce recurrence.

4.4 Documentation and recordkeeping

We maintain internal documentation proportionate to feature risk and support customers with documentation needed for procurement and governance reviews, aligning with emerging expectations for traceability and recordkeeping (DISR, 2025; NIST, 2023).

5. Data protection, privacy, and training use

5.1 Customer data and AI training

Coursebox’s policy is that customer content and learner data are not used to train or fine‑tune Coursebox models unless explicitly agreed in writing for a defined purpose, with appropriate safeguards.

5.2 Use of commercial AI services

Coursebox may use commercial AI services to provide AI capabilities. As relevant examples of supplier positions:

- Microsoft states that Azure OpenAI does not use customer data to retrain models (Microsoft, 2025).

- Google documentation for Gemini for Google Cloud indicates that prompts and responses are not used to train the model, and includes data governance detail (Google Cloud, 2026).

- For optional AI video creation using HeyGen, HeyGen’s privacy materials indicate that users may have the right to object to, or opt out of, their information being used to train models by contacting HeyGen’s privacy address. HeyGen also describes opt‑out and enterprise-related positions in its GDPR materials (HeyGen, 2025–2026). HeyGen formally confirmed in writing on 26 February 2026 that Coursebox’s Enterprise API account has been fully opted out of model training and removed from all data training pipelines. This arrangement is approved and in effect.

Note: Supplier terms and controls can change; Coursebox reviews material supplier changes and can disable optional features where customer policy requires it.

5.3 Transparency in privacy notices

Where Coursebox functionality involves personal information, privacy obligations apply. Australian privacy guidance recommends clear and transparent information about AI use and clearly identifying public-facing AI tools such as chatbots (OAIC, 2025).

6. Transparency to learners and AI-generated content disclosures

This section addresses a common governance question for LMS and white-label deployments: Do customers need to inform learners that course text/images/videos may be AI-generated or AI-assisted?

6.1 What is commonly expected (baseline)

Across major frameworks, transparency is a consistent expectation, even where legal mandates differ. For example:

- Australia’s Voluntary AI Safety Standard explicitly calls on organisations to “inform end-users” and “disclose when… generating content using AI” (DISR, 2025).

- The EU AI Act includes transparency obligations for interactive AI systems and for certain synthetic/deepfake content (European Commission, 2024).

- The US approach is more framework- and enforcement-led: NIST provides voluntary risk management guidance (NIST, 2023; NIST, 2024), and the FTC has taken actions where AI is used to enable deception or unfair practices (FTC, 2024).

6.2 European Union: when disclosure is required (and when it is not)

Under Article 50 of the EU AI Act:

- Interactive AI: Providers must ensure that AI systems intended to interact directly with people inform them they are interacting with an AI system (unless obvious) (European Commission, 2024). Action: from 2 August 2026, we will enable a contextualised AI note for users of AI grading, open-answer quiz questions, tutoring, and any other AI interactions.

- Machine-readable marking: Providers of AI systems generating synthetic audio/image/video/text content must ensure outputs are marked in a machine-readable format and detectable as artificially generated/manipulated (European Commission, 2024). Action: from 2 August 2026, we will enable machine-readable markers.

- Deepfakes (image/audio/video): Deployers must disclose deepfake content as artificially generated/manipulated, with limited exceptions (European Commission, 2024). Action: for AI videos generated from 2 August 2026, we will enable a contextualised AI note for users.

- AI-generated text for public-interest publication: A disclosure duty is specified for text published to inform the public on matters of public interest, with an exception where there is human review/editorial control and editorial responsibility (European Commission, 2024). Action: for any text or images generated by AI prior to publication by a course admin, learners are notified via the signup terms and conditions of use.

- Timing: The European Commission has stated that the transparency rules for AI-generated content become applicable on 2 August 2026 (European Commission, 2025).
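As an illustration of what a machine-readable marker could look like, the sketch below builds a small JSON record that flags a piece of content as AI-generated and ties it to the content by hash. This is an assumption made for illustration only: the schema, the field names (`ai_generated`, `generator`, `media_type`, `content_sha256`), and both function names are hypothetical, and they do not describe Coursebox's implementation or any format mandated by the EU AI Act.

```python
import hashlib
import json


def build_ai_marker(content: bytes, generator: str, media_type: str) -> str:
    """Return a JSON record flagging `content` as AI-generated (hypothetical schema)."""
    record = {
        "ai_generated": True,
        "generator": generator,      # free-text label for the generating service
        "media_type": media_type,    # e.g. "image/png" or "text/plain"
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)


def is_marked_ai_generated(marker_json: str, content: bytes) -> bool:
    """Check that the marker declares AI generation and matches this exact content."""
    record = json.loads(marker_json)
    digest = hashlib.sha256(content).hexdigest()
    return record.get("ai_generated") is True and record.get("content_sha256") == digest


# Usage: mark some example bytes, then verify the marker against them.
marker = build_ai_marker(b"example image bytes", "example-generator", "image/png")
print(is_marked_ai_generated(marker, b"example image bytes"))
```

A sidecar record like this is only one option; markers can also be embedded in file metadata, and the appropriate approach depends on the guidance that applies when the obligations take effect.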

Practical implication for Coursebox customers (EU):

- If a customer uses AI features that interact with learners (e.g., an AI tutor/chat experience), learners should be informed they are interacting with AI (European Commission, 2024).

- If a customer uses AI-generated video that could constitute a “deep fake” (e.g., realistic avatar video resembling a real person), the deployer/customer should plan to provide an appropriate disclosure to learners in EU contexts (European Commission, 2024).

- For ordinary internal training text that is not “published to inform the public on matters of public interest,” Article 50’s specific text-publication disclosure trigger may not apply; nonetheless transparency remains a best practice expectation and may be required under other applicable laws/policies depending on context (European Commission, 2024).
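The practical implications above can be summarised as a simple checklist. The sketch below is illustrative only and is not legal advice: the `CourseItem` fields and the `eu_disclosure_notes` function are hypothetical names, and the logic deliberately ignores exceptions mentioned in this section (such as the "unless obvious" carve-out for interactive AI).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CourseItem:
    """Hypothetical description of one piece of course content."""
    interactive_ai: bool = False               # e.g. an AI tutor/chat experience
    realistic_ai_video: bool = False           # AI video that could constitute a deep fake
    ai_text_for_public_interest: bool = False  # AI text published to inform the public
    human_editorial_control: bool = False      # human review plus editorial responsibility


def eu_disclosure_notes(item: CourseItem) -> list[str]:
    """Map the Article 50 triggers described in this section to reminder notes."""
    notes = []
    if item.interactive_ai:
        notes.append("Inform learners that they are interacting with an AI system.")
    if item.realistic_ai_video:
        notes.append("Disclose the video as artificially generated or manipulated.")
    if item.ai_text_for_public_interest and not item.human_editorial_control:
        notes.append("Disclose the text as AI-generated.")
    if not notes:
        notes.append("No specific trigger identified; transparency remains best practice.")
    return notes


# Usage: a course that combines an AI tutor with a realistic avatar video.
for note in eu_disclosure_notes(CourseItem(interactive_ai=True, realistic_ai_video=True)):
    print(note)
```

The fallback note reflects the point made above that transparency remains a best-practice expectation even where no specific trigger applies.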

6.3 Australia: legal requirement vs. governance expectation

Australia currently emphasises governance expectations via:

- The Voluntary AI Safety Standard, which explicitly recommends disclosure to end-users about AI interactions and AI-generated content (DISR, 2025).

- OAIC privacy guidance recommending clear statements about AI use in privacy policies/notifications and identifying public-facing AI tools like chatbots (OAIC, 2025).

Practical implication (Australia):

Even where a specific statutory “AI label” is not mandated for internal training materials, disclosure is a strong governance expectation and reduces the risk of lost trust and misrepresentation, especially for AI tutor/chat interactions and synthetic video/avatar content (DISR, 2025).

6.4 United States: disclosure is usually risk-based (not a single universal rule)

In the US, expectations relevant to this question are typically driven by:

- Voluntary frameworks (e.g., NIST AI RMF and the NIST Generative AI Profile) that emphasise managing risks such as information integrity, harmful content, and transparency needs (NIST, 2023; NIST, 2024).

- Consumer protection enforcement: the FTC has pursued actions where AI is used to “turbocharge deception” and unfair practices (FTC, 2024).

Practical implication (US):

For enterprise training content, disclosure is typically not a universal statutory requirement, but it can be important where failing to disclose could mislead learners about the nature or source of content (FTC, 2023; FTC, 2024).

7. SCORM, LTI and API Deployments

Where Coursebox content is exported or delivered via SCORM wrapper, LTI, iframe, or API into a third-party LMS or learning environment, learners may not see or accept Coursebox’s User Terms or Privacy Policy. In such cases, the responsibility for providing any required AI transparency or disclosure rests with the course publisher or deploying organisation, which controls the learner-facing environment. Accordingly, where applicable law or governance standards require transparency, the publisher should notify learners that certain course materials, images, video, or interactive elements may have been generated or assisted by artificial intelligence.

8. Continuous improvement and external standards alignment

Coursebox continuously improves its Responsible AI controls and references:

- NIST AI RMF and NIST’s GenAI risk guidance (NIST, 2023; NIST, 2024).

- ISO/IEC AI management and risk standards as they are adopted by industry (ISO/IEC, 2023a; ISO/IEC, 2023b).

- OECD and UNESCO principles for trustworthy AI (OECD, 2024; UNESCO, 2021).

- EU AI Office guidance and voluntary Codes of Practice as they evolve toward application of Article 50 transparency obligations (European Commission, 2025).

9. Governance and Oversight Assurance

Coursebox maintains internal governance controls to ensure AI capabilities are deployed in a secure, risk-managed, and accountable manner.

AI feature development and integration decisions consider:

- Operational and security risk

- Regulatory requirements

- Contractual obligations

AI functionality operates within defined technical and access control boundaries consistent with Coursebox’s information security framework.

Oversight includes:

- Periodic review of AI feature performance

- Monitoring of regulatory developments

- Review of material changes affecting AI integrations

- Policy updates where required

Customers retain control over AI-enabled features within their environment, and optional capabilities may be enabled or disabled based on organisational requirements.

This section complements, and does not replace, the commitments set out in Sections 1–8.

10. Third-Party AI Service Governance

Third-party AI providers are subject to due diligence and ongoing oversight prior to and following integration.

Providers are evaluated against:

- Data protection and regulatory alignment

- Secure API-based processing

- Enterprise-grade infrastructure and resilience

- Transparent privacy documentation

- Appropriate contractual safeguards

Coursebox monitors material changes to provider terms or data handling practices and may adjust configurations, restrict functionality, or suspend integrations where necessary.

Client Control & Feature Configuration

Certain AI features are optional and may be configured or disabled based on organisational compliance needs. Clients may configure:

- AI feature access controls

- Hosting preferences

- Data processing boundaries

- Available provider opt-out mechanisms

Coursebox retains the authority to restrict or suspend AI integrations where required for legal, security, or compliance reasons.
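To picture how these configuration options relate to the providers described earlier on this page, the sketch below models a per-organisation settings record. It is purely illustrative: the class, field names, feature keys, and the feature-to-provider mapping layout are hypothetical and do not reflect Coursebox's actual settings, schema, or API. Only the provider names and the default hosting location come from this page.

```python
from dataclasses import dataclass, field

# Optional features mapped to the third-party providers named on this page.
# The feature keys are hypothetical labels chosen for this example.
FEATURE_PROVIDERS = {
    "ai_images": "Google Gemini Pro",
    "text_to_speech": "Microsoft Azure Neural TTS",
    "document_image_extraction": "Mistral",
    "ai_video": "HeyGen",
}


@dataclass
class AIFeatureSettings:
    """Hypothetical per-organisation AI settings (not Coursebox's real schema)."""
    hosting: str = "OVH France"  # default hosting named on this page
    enabled_features: set[str] = field(default_factory=set)


def active_providers(settings: AIFeatureSettings) -> list[str]:
    """List the providers that would process data for the enabled features."""
    return sorted(
        FEATURE_PROVIDERS[name]
        for name in settings.enabled_features
        if name in FEATURE_PROVIDERS
    )


# Usage: an organisation that enables only AI video and text-to-speech.
settings = AIFeatureSettings(enabled_features={"ai_video", "text_to_speech"})
print(active_providers(settings))  # ['HeyGen', 'Microsoft Azure Neural TTS']
```

Keeping the mapping in one place makes it straightforward to see which suppliers are in scope for a given organisation when a compliance review asks for a list of data processors.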

References

DISR (Department of Industry, Science and Resources) (2025) The 10 guardrails | Voluntary AI Safety Standard. Available at: https://www.industry.gov.au/publications/voluntary-ai-safety-standard/10-guardrails (Accessed: 26 February 2026).

European Commission (2024) Article 50: Transparency obligations for providers and deployers of certain AI systems (AI Act Service Desk). Available at: https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-50 (Accessed: 26 February 2026).

European Commission (2025) Commission publishes first draft of Code of Practice on marking and labelling of AI-generated content. Available at: https://digital-strategy.ec.europa.eu/en/news/commission-publishes-first-draft-code-practice-marking-and-labelling-ai-generated-content (Accessed: 26 February 2026).

European Commission (2025) Code of Practice on marking and labelling of AI-generated content. Available at: https://digital-strategy.ec.europa.eu/en/policies/code-practice-ai-generated-content (Accessed: 26 February 2026).

FTC (Federal Trade Commission) (2023) Chatbots, deepfakes, and voice clones: AI deception for sale. Available at: https://www.ftc.gov/business-guidance/blog/2023/03/chatbots-deepfakes-voice-clones-ai-deception-sale (Accessed: 26 February 2026).

FTC (Federal Trade Commission) (2024) FTC Announces Crackdown on Deceptive AI Claims and Schemes (Operation AI Comply). Available at: https://www.ftc.gov/news-events/news/press-releases/2024/09/ftc-announces-crackdown-deceptive-ai-claims-schemes (Accessed: 26 February 2026).

FTC (Federal Trade Commission) (2024) AI Companies: Uphold Your Privacy and Confidentiality Commitments. Available at: https://www.ftc.gov/policy/advocacy-research/tech-at-ftc/2024/01/ai-companies-uphold-your-privacy-confidentiality-commitments (Accessed: 26 February 2026).

Google Cloud (2026) How Gemini for Google Cloud uses your data (Data governance). Available at: https://docs.cloud.google.com/gemini/docs/discover/data-governance (Accessed: 26 February 2026).

Google Cloud (2026) View Gemini for Google Cloud logs. Available at: https://docs.cloud.google.com/gemini/docs/log-gemini (Accessed: 26 February 2026).

HeyGen (2025–2026) Privacy Policy. Available at: https://www.heygen.com/privacy (Accessed: 26 February 2026).

HeyGen (2024–2026) GDPR Compliance. Available at: https://www.heygen.com/gdpr-compliant (Accessed: 26 February 2026).

ISO/IEC (2023a) ISO/IEC 42001 — Artificial intelligence management system. Available at: https://www.iso.org/standard/81230.html (Accessed: 26 February 2026).

ISO/IEC (2023b) ISO/IEC 23894:2023 — Artificial intelligence — Guidance on risk management. Available at: https://webstore.ansi.org/standards/iso/isoiec238942023 (Accessed: 26 February 2026).

Microsoft (2025) Azure OpenAI frequently asked questions (Data and Privacy). Available at: https://learn.microsoft.com/en-us/azure/ai-foundry/openai/faq?view=foundry-classic (Accessed: 26 February 2026).

Microsoft (2025) Data, privacy, and security for Azure Direct Models in Microsoft Foundry (includes Azure OpenAI models). Available at: https://learn.microsoft.com/en-us/azure/ai-foundry/responsible-ai/openai/data-privacy?view=foundry-classic (Accessed: 26 February 2026).

NIST (National Institute of Standards and Technology) (2023) AI Risk Management Framework (AI RMF 1.0) (NIST AI 100-1). Available at: https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf (Accessed: 26 February 2026).

NIST (2024) Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile (NIST AI 600-1). Available at: https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf (Accessed: 26 February 2026).

OAIC (Office of the Australian Information Commissioner) (2025) Guidance on privacy and the use of commercially available AI products. Available at: https://www.oaic.gov.au/privacy/privacy-guidance-for-organisations-and-government-agencies/guidance-on-privacy-and-the-use-of-commercially-available-ai-products (Accessed: 26 February 2026).

OECD (2024) Recommendation of the Council on Artificial Intelligence (OECD/LEGAL/0449) (amended 03/05/2024). Available at: https://legalinstruments.oecd.org/en/instruments/OECD-LEGAL-0449 (Accessed: 26 February 2026).

UNESCO (2021) Recommendation on the Ethics of Artificial Intelligence. Available at: https://www.unesco.org/en/artificial-intelligence/recommendation-ethics (Accessed: 26 February 2026).
